Expert Telecom Maintenance Services in Atlanta

A network problem rarely announces itself at a convenient time. In Atlanta, it usually shows up when a clinic is full, a campus is in session, a warehouse is shipping, or a high-rise tenant is trying to get through a voice call that suddenly sounds like it’s underwater. At that point, “the phones are down” or “the Wi-Fi is unstable” isn’t a small IT issue. It’s an operations issue, a compliance issue, and sometimes a patient-care issue.

That’s why serious buyers of telecom maintenance services in Atlanta don’t treat cabling, fiber, DAS, PBX, and network edge hardware as background utilities. They treat them like production infrastructure. If your building depends on voice, wireless coverage, cloud applications, badge access, cameras, or connected lab and medical devices, then maintenance isn’t optional. It’s the discipline that keeps minor defects from turning into outages, finger-pointing, and rushed replacement projects.

Your Atlanta Business Runs on Connectivity. Are You Protecting It?

At 8:15 on a Monday, a Buckhead medical office can look fully operational while one weak closet switch, a mislabeled patch panel, or a failing fiber termination is already slowing check-in, voice traffic, and cloud access. The outage has not happened yet. The business impact has.

That is the operating reality for Atlanta businesses. Dense multi-tenant buildings, older risers, ongoing office reconfigurations, hybrid voice environments, in-building wireless demands, and constant moves, adds, and changes put steady pressure on telecom systems. Healthcare groups, universities, research facilities, logistics operators, and law firms all depend on the same thing: stable connectivity at the edge, not just bandwidth from the carrier.

A lot of companies assume strong carrier availability reduces maintenance risk. It does not. Fast transport to the building does nothing for a bad cross-connect, overheated IDF, loose SFP, neglected UPS battery, or undocumented change inside the premises. The practical question is whether your team can find and fix weak points before users open tickets, departments lose hours, or leadership approves an emergency replacement at the worst possible time.

Good telecom maintenance protects more than uptime. It preserves documentation, keeps support boundaries clear between carrier and customer equipment, and gives IT and facilities a usable record of what is installed, what is aging out, and what should be retired instead of patched again. For organizations comparing staffing models, this managed network services guide is useful background, but the decision still comes down to response time, site complexity, and whether the provider can support the full lifecycle of the equipment in your racks and closets.

That full lifecycle matters in regulated Atlanta environments. A switch, firewall, PBX appliance, or wireless controller leaving service may still hold credentials, logs, call data, or configuration files. Any maintenance plan tied to upgrades should include documented data security and sanitization practices so retired hardware does not become a compliance problem during disposal.

Field reality is simple. Many telecom failures start as maintenance failures, then turn into security, compliance, and budget failures once the hardware is replaced without a clean decommissioning plan.

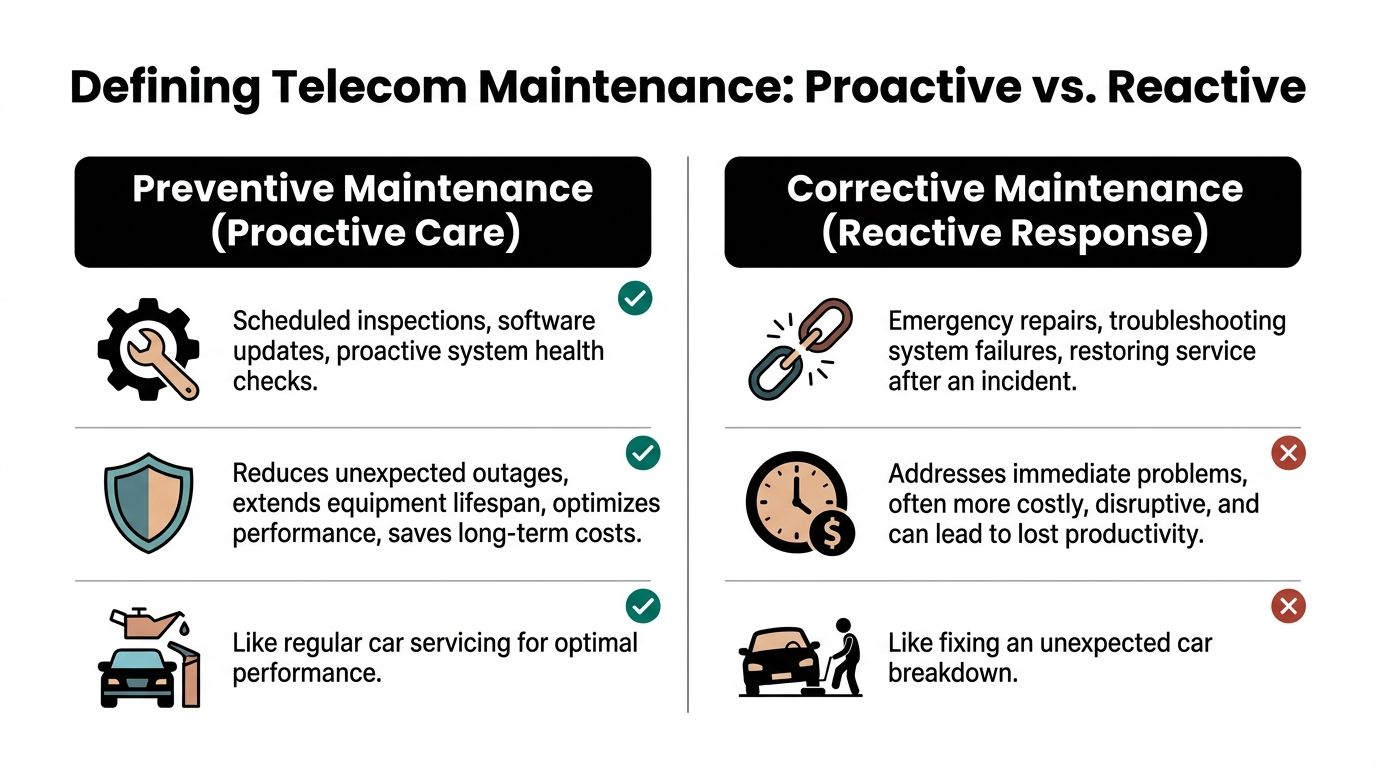

Defining Telecom Maintenance: Preventive vs. Corrective Care

A good way to explain telecom maintenance is to compare it to scheduled service on a high-performance vehicle. If you wait for smoke, noise, or a roadside breakdown, you’re paying for failure. If you service the vehicle on schedule, inspect wear points, and watch the dashboard indicators, you usually avoid the expensive event.

That same split exists in telecom. Preventive maintenance is the planned work that reduces the odds of failure. Corrective maintenance is the work you do after service is already impaired. Most Atlanta organizations need both, but the balance matters. Heavy dependence on corrective work almost always means too little visibility, weak documentation, or no lifecycle discipline.

What preventive care actually looks like

Preventive telecom maintenance isn’t one task. It’s a repeatable operating habit.

- Physical inspections: Technicians inspect racks, patch fields, grounding, cable management, power paths, fan status, connector condition, and signs of heat or contamination.

- Software and firmware review: Teams validate versions, close known bugs where appropriate, and avoid the common mistake of applying updates without a rollback plan.

- Performance baselining: Monitoring establishes what “normal” looks like for signal levels, call quality, interface behavior, and wireless coverage.

- Documentation cleanup: Port maps, fiber paths, cross-connect records, and device inventories get updated before someone has to troubleshoot under pressure.

When preventive work is done well, corrective calls become narrower and faster. The team already knows the environment. They know where the risers go, which closet was modified last quarter, which gateway has a history of power issues, and which fiber route needs special handling.

Corrective care is necessary, but it’s expensive

Corrective maintenance is still essential. Hardware fails. Carriers have issues. Tenants make unauthorized changes. Renovation crews nick pathways and nobody reports it until packet loss shows up.

The problem is cost and disruption. Bain notes that field operations are a primary cost center in telecom, and U.S. telecom operators are projected to cut $28 billion in costs by 2028 through AI-powered predictive maintenance and self-healing networks that reduce truck rolls and improve reliability, according to Bain’s analysis of the telecom cost-cutting imperative. The lesson for Atlanta businesses is simple. Every avoidable dispatch, rushed parts order, and after-hours emergency has a real operational cost, even before you count user downtime.

For buyers comparing support models, a broader operational framework is helpful. A practical reference is this managed network services guide, which outlines how organizations shift from ad hoc fixes to structured oversight. The same logic applies to telecom maintenance contracts.

Remote monitoring changes the equation

Remote monitoring is the closest thing telecom has to an onboard diagnostic system. It doesn’t replace field work, but it does let teams spot drift before users complain.

What works:

- Watching trends, not just alarms

- Tagging recurring incidents to a physical location or hardware family

- Pairing monitoring with accurate asset records

- Escalating on symptoms that repeat, even when they clear on their own

What doesn’t work:

- Alerting on everything

- Ignoring low-grade anomalies because service “came back”

- Treating remote tools as a substitute for testing, labeling, and inspections
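
One way to act on the "what works" list above is to count repeats by physical location and hardware family, and to count incidents that cleared on their own the same as any other. The Python sketch below is a minimal illustration of that idea. The `Incident` fields, the labels such as `IDF-3`, and the threshold of three are assumptions made for the example, not values from any particular monitoring product.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    location: str          # closet or floor label (hypothetical naming)
    hardware_family: str   # e.g. "access-switch"
    symptom: str           # e.g. "uplink-flap"
    self_cleared: bool     # True if service came back without intervention


def recurring_symptoms(incidents, threshold=3):
    """Return (location, hardware_family, symptom) keys seen at least `threshold` times.

    Self-cleared incidents are counted like any other, so a fault that
    keeps "coming back on its own" still reaches the escalation list.
    """
    counts = Counter((i.location, i.hardware_family, i.symptom) for i in incidents)
    return sorted(key for key, n in counts.items() if n >= threshold)


incidents = [
    Incident("IDF-3", "access-switch", "uplink-flap", True),
    Incident("IDF-3", "access-switch", "uplink-flap", True),
    Incident("MDF", "voice-gateway", "re-register", False),
    Incident("IDF-3", "access-switch", "uplink-flap", True),
]

for key in recurring_symptoms(incidents):
    print("escalate:", key)
```

In this sample data, the three self-cleared uplink flaps in the same closet are the only pattern that crosses the threshold. The single gateway incident is not escalated.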

A lot of preventable pain starts when a team believes visibility is the same thing as control. It isn’t. Visibility tells you where to look. Maintenance is what keeps the problem from returning. If you’re evaluating what disciplined handling looks like from intake to final disposition, a documented service workflow is a useful benchmark.

Don’t confuse “up” with “healthy.” A system can still pass traffic while its margins are collapsing.

A Deep Dive into Atlanta Telecom Service Specialties

A typical Atlanta service call is not one problem in one closet. It is a fiber handoff issue on one floor, a dead zone complaint in a stairwell, intermittent Wi-Fi roaming on another tenant level, and a legacy voice gateway still carrying traffic because the migration never fully finished. Maintenance planning has to match that reality.

Atlanta properties also have a local twist. High-rises, medical campuses, mixed-use developments, research facilities, and older institutional buildings often carry several generations of telecom infrastructure at once. A provider may be excellent at carrier demarc testing and still struggle inside the building. Another may know cabling well but miss RF drift, voice survivability issues, or the documentation needed when equipment is retired.

Fiber optic maintenance

Fiber rarely gives a clean failure. More often, performance slips first. Users report intermittent errors, uplinks flap under load, or a link goes unstable after nearby construction, cabinet work, or a rushed patching change.

Good fiber maintenance stays disciplined:

- OTDR testing to pinpoint breaks, reflections, and loss events

- Connector inspection and cleaning to remove contamination before it becomes chronic loss

- Splice review to catch poor workmanship on backbone segments and handoffs

- Pathway verification so records match the actual route in the riser, tray, or conduit
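
To make test results easier to read, it can help to compare a measured loss against an estimated loss budget for the link. The sketch below shows the arithmetic in Python. The per-kilometer, per-connector, and per-splice allowances, the link length, and the 0.5 dB warning margin are illustrative assumptions; use the figures from your own cable specification and applicable standard.

```python
def loss_budget_db(length_km, connector_pairs, splices,
                   fiber_db_per_km, connector_db, splice_db):
    """Estimated worst-case link loss in dB from per-component allowances."""
    return (length_km * fiber_db_per_km
            + connector_pairs * connector_db
            + splices * splice_db)


def check_link(measured_db, budget_db, warn_db=0.5):
    """Classify measured loss against the budget.

    Returns (status, headroom_db). "marginal" means the link still passes
    but has less than `warn_db` of headroom left.
    """
    headroom = budget_db - measured_db
    if headroom < 0:
        status = "fail"
    elif headroom < warn_db:
        status = "marginal"
    else:
        status = "pass"
    return status, round(headroom, 2)


# Illustrative allowances only; substitute the figures from your own spec.
budget = loss_budget_db(length_km=0.3, connector_pairs=2, splices=1,
                        fiber_db_per_km=3.0, connector_db=0.75, splice_db=0.3)

print(round(budget, 2))
print(check_link(1.9, budget))
print(check_link(2.5, budget))
```

The "marginal" status is there to flag a link that still carries traffic but is running out of headroom, which is usually the cheaper moment to inspect and clean connectors.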

In the field, the recurring issue is usually not the glass alone. It is unlabeled jumpers, crowded panels, old records, and change work done without closeout updates. That is why a repair that looked finished on Tuesday turns into another outage two weeks later.

DAS and in-building wireless

In-building wireless support is a different discipline from outside plant or structured cabling. Atlanta hospitals, government sites, dense office towers, and concrete-heavy interiors need periodic RF validation because tenant changes, remodels, shielding materials, and equipment additions all affect coverage.

For DAS and public safety systems, maintenance should include re-testing after physical changes, checking passive components and backhaul health, and confirming that compliance records still match the live system. I see buildings get into trouble when the original acceptance test becomes the only real test anyone can find. Years later, the system is technically installed but no longer performing the way the owner assumes.

A practical maintenance table helps separate routine checks from failure chasing:

| Maintenance concern | What strong teams do |
|---|---|
| Coverage gaps | Re-test after remodels, tenant changes, and material changes that affect RF |
| Public safety readiness | Review inspection records, pathways, battery backup, and responder radio performance |
| Component drift | Inspect connectors, amplifiers, remotes, and backhaul links for gradual degradation |
| Peak occupancy issues | Test during realistic building use, not only in off-hours |

Enterprise Wi-Fi and user experience

Wi-Fi complaints usually arrive as business complaints first. Clinicians lose session continuity, researchers cannot stay attached while moving between spaces, guests fail onboarding, or staff keep reconnecting in the same wing every afternoon.

Maintenance work here involves more than replacing access points. Teams need post-change validation, controller and switch review, channel and power adjustments, authentication testing, and coverage checks after occupancy or layout changes. Guest access deserves special attention because it touches security policy, user friction, and brand experience at the same time. If you are comparing approaches to onboarding, segmentation, and visitor access controls, this overview of secure guest Wi-Fi and authentication is useful context.

A wireless ticket often starts with "slow internet" and ends with a floor plan change, a new imaging device, or an access rule no one documented.

Voice systems and legacy platforms

Voice still carries real operational risk in Atlanta environments that depend on emergency calling, paging, contact centers, nurse call adjacency, elevators, or campus notifications. Many sites are in a hybrid state. SIP is live at the edge, but older shelves, gateways, or analog dependencies still sit in the path.

That mix creates maintenance problems a cloud-only team can miss. Common examples include QoS errors affecting call quality, VLAN mistakes that break voice paths, aging power supplies, PRI or gateway issues left behind during migration, and call logs that were never exported before a platform was shut down.

The bigger operational mistake is treating retirement as someone else’s job. Once switches, PBX hardware, optics, controllers, and appliances come out of service, they still carry configuration data, credentials, call records, and asset-tracking obligations. Maintenance planning should account for that end-of-life work early, including data center equipment recycling for retired telecom and network hardware, so old gear does not sit in a staging room without custody records, destruction planning, or a clear disposal path.

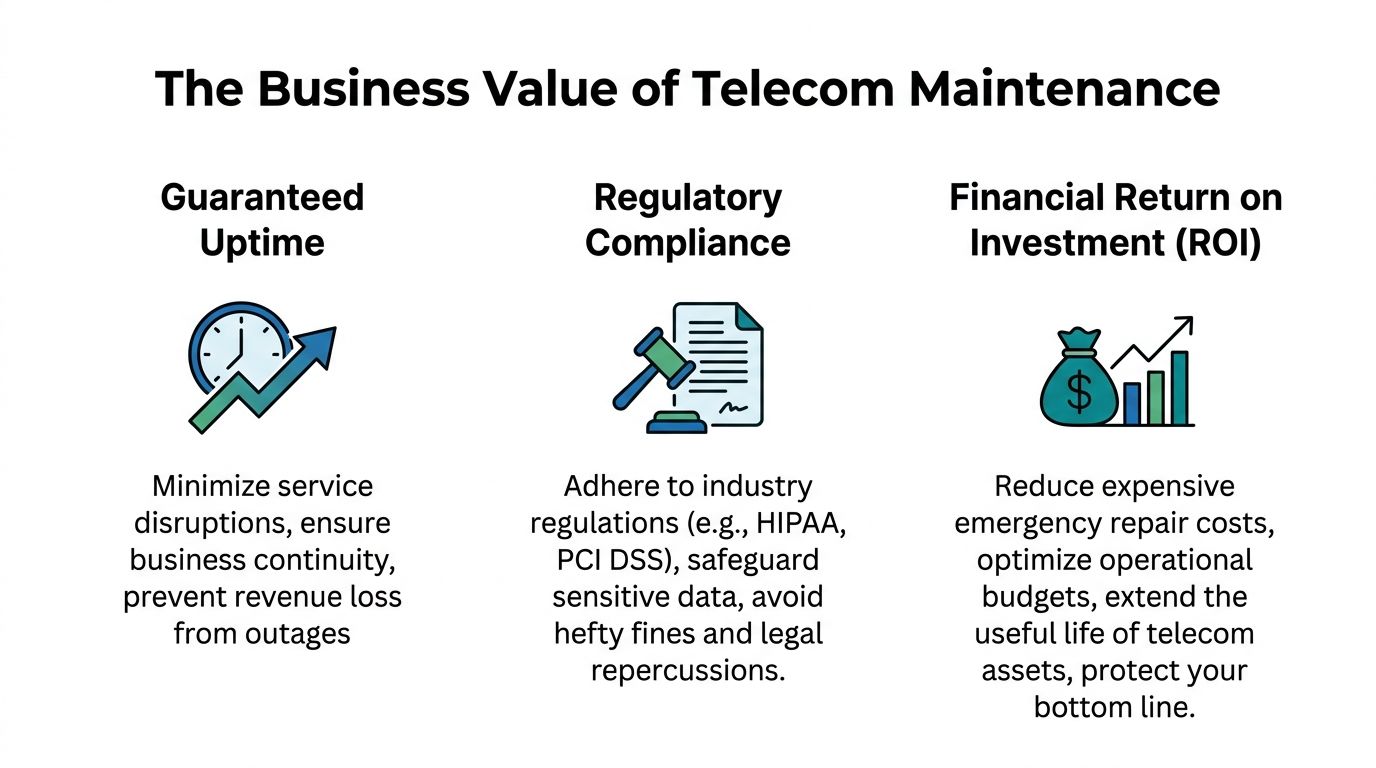

The Business Case: Uptime, Compliance, and Financial ROI

A telecom maintenance budget earns its keep when it prevents business interruption, stands up to an audit, and keeps labor costs under control. In Atlanta, those three outcomes are tied together more tightly than many buyers expect.

Uptime protects revenue and daily operations

A clinic opens with one IDF closet half down. The Wi-Fi signal is weak in exam rooms, VoIP handsets start re-registering, and staff fall back to personal phones and paper notes. Nobody calls that a telecom issue by noon. It becomes a patient flow problem, a billing problem, and a leadership problem.

That is the essential return on maintenance. Stable connectivity keeps departments working the way the business was designed to work. It also cuts the hidden cost of workarounds, repeat tickets, and emergency dispatches that usually cost more than routine preventive care.

For multi-site Atlanta organizations, the financial impact is rarely isolated to one room or one rack. A single neglected switch stack, battery backup, fiber handoff, or controller can affect scheduling, access control, guest services, call handling, and cloud application performance across multiple locations. Strong providers do more than restore service. They correct the underlying fault, document the change, and verify that the fix holds under normal production use.

Compliance depends on evidence

In regulated environments, maintenance work is only half the job. The other half is proving what was serviced, what changed, who touched it, and what happened when equipment left production.

That matters in healthcare, research, higher education, and government sites around Atlanta. Auditors and internal compliance teams do not accept "we fixed it" as a control. They look for service logs, test results, access records, chain-of-custody details, and retirement records for equipment that may hold credentials, call history, configuration files, or user data. A provider that cannot produce those records creates risk even if the technical repair was sound.

I see this gap often during upgrades. Teams budget for replacement hardware and installation, but not for the documentation and disposition work that closes the loop. If retired telecom gear is sitting in a closet with old configs still on it, the maintenance program is incomplete. Budgeting for IT asset disposal services for retired network and telecom equipment at the same time as support and refresh work usually costs less than cleaning up custody, data handling, and e-waste problems later.

The labor equation affects ROI

Building every telecom specialty in-house is expensive, especially in environments that mix fiber, structured cabling, RF, carrier circuits, voice, paging, and legacy hardware. Telecom labor is specialized work, and Atlanta rates for experienced field technicians and niche platform support are not cheap.

The practical question is not whether internal IT is valuable. It is where internal time produces the best return. Internal teams usually add the most value when they own standards, vendor oversight, change approval, and business alignment. Field troubleshooting, cable-path investigation, patching errors, hardware swaps, RF testing, and after-hours response are often better handled by technicians who do that work every day.

That division of labor improves speed and lowers waste.

Operational test: If your team spends more time hunting for diagrams, passwords, and service histories than resolving the actual fault, maintenance is not under control. The business is paying for disorder.

Understanding Service Agreements and Pricing Models

Most frustration with telecom maintenance contracts starts before the first ticket is opened. The scope is vague, the response language is soft, and the buyer assumes “support” means more than it provides.

Three pricing models show up most often in Atlanta telecom maintenance services.

Time and materials

This is the pure reactive model. You call when something breaks, the provider dispatches, and you pay for labor, travel, and parts as needed.

It works best for:

- Small sites with simple environments

- One-off remediation after a move, outage, or construction incident

- Organizations that already have strong internal telecom oversight

It works poorly when:

- The site has chronic issues

- The environment includes DAS, legacy voice, or multiple campuses

- You need predictable budgeting

Block hours

Block-hour retainers sit in the middle. You pre-purchase access to skilled labor and use it for inspections, remediation, documentation updates, and small projects.

The value isn’t just discounted time. It’s continuity. The same technicians get familiar with your risers, closets, racks, RF trouble spots, and historical issues. That usually shortens future incidents.

A buyer should still ask hard questions:

- Which work consumes hours and which work is excluded?

- Do remote diagnostics count the same as field dispatch?

- Do after-hours calls burn hours differently?

- What happens to unused hours?
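
The answers to those questions change how quickly a block is consumed. As a rough illustration, the Python sketch below tracks remaining hours when different kinds of work burn at different rates. The work categories and the 1.5x after-hours multiplier are hypothetical; the real values come from the contract.

```python
from dataclasses import dataclass


@dataclass
class WorkEntry:
    hours: float
    kind: str  # "remote", "onsite", or "after_hours" (hypothetical categories)


# Hypothetical burn multipliers; the real values come from the contract.
MULTIPLIERS = {"remote": 1.0, "onsite": 1.0, "after_hours": 1.5}


def hours_remaining(block_hours, entries, multipliers=MULTIPLIERS):
    """Subtract multiplier-weighted hours from the purchased block."""
    burned = sum(e.hours * multipliers[e.kind] for e in entries)
    return block_hours - burned


entries = [
    WorkEntry(2.0, "remote"),
    WorkEntry(4.0, "onsite"),
    WorkEntry(3.0, "after_hours"),
]

print(hours_remaining(40, entries))  # 40 - (2 + 4 + 4.5) = 29.5
```

Running the same entries with a different multiplier table is a quick way to compare two proposals before signing.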

Managed services agreements

This is the most mature model. A managed agreement usually combines scheduled preventive work, monitoring, dispatch rules, escalation paths, and documented reporting.

The best MSAs are specific. They define:

- Covered systems

- Inspection cadence

- Escalation contacts

- Reporting format

- Parts handling

- Change documentation expectations

- Decommissioning support boundaries

The weak ones hide behind broad phrases like “best effort support.”

Read the SLA like a field manager

A service level agreement should tell you exactly how a provider behaves when your site has a problem.

Use this quick comparison when reviewing proposals:

| SLA term | What it should mean in practice | What to watch for |
|---|---|---|
| Response time | How fast the provider acknowledges and starts action | “Response” that only means an email reply |
| On-site dispatch | When a technician is actually en route or arriving | No distinction between remote triage and arrival |
| Resolution time | Expected window to restore service or implement workaround | No carve-out for parts delays or carrier dependencies |
| Coverage window | Business hours, after-hours, holidays | Hidden premiums and exclusions |
| Exclusions | What the contract doesn’t cover | Cabling, power, or legacy gear quietly excluded |

A good contract also spells out ownership boundaries. If a carrier circuit is down, who opens the case? If a tenant contractor damages cabling, who documents it? If a voice platform is retired, who exports records first? Those details matter more than glossy uptime language.
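
The distinction between response, on-site dispatch, and resolution is easier to enforce when each one is measured from ticket timestamps. The Python sketch below shows one way to do that. The target values and the sample ticket times are hypothetical, and measuring every interval from the time of the first report is an assumption that your contract may define differently.

```python
from datetime import datetime, timedelta


def sla_intervals(reported, acknowledged, onsite, restored):
    """Return the three intervals the SLA distinguishes, as timedeltas.

    Each interval is measured from the time of the first report.
    """
    return {
        "response": acknowledged - reported,
        "dispatch": onsite - reported,
        "resolution": restored - reported,
    }


def breaches(intervals, targets):
    """List the SLA terms whose measured interval exceeds its target."""
    return [term for term, limit in targets.items() if intervals[term] > limit]


# Hypothetical targets and ticket timestamps for illustration.
targets = {
    "response": timedelta(minutes=30),
    "dispatch": timedelta(hours=4),
    "resolution": timedelta(hours=8),
}
t0 = datetime(2024, 1, 8, 2, 0)
intervals = sla_intervals(
    reported=t0,
    acknowledged=t0 + timedelta(minutes=20),
    onsite=t0 + timedelta(hours=5),
    restored=t0 + timedelta(hours=7),
)

print(breaches(intervals, targets))
```

In this sample ticket the provider acknowledged quickly and restored service inside the window, but the technician arrived later than the dispatch target. A report that only tracks "response" would not show that.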

The Ultimate Atlanta Provider Selection Checklist

A provider interview usually sounds good until the first outage hits. The true test comes at 2 a.m. when a failed uplink affects phones, badge access, Wi-Fi calling, or nurse call traffic, and your team needs a technician who already knows how to get into the building, who to call, what gear is on site, and how to document the work for IT and compliance.

Provider selection in Atlanta is operational first. High-rise offices, hospital campuses, research buildings, mixed-use properties, and older facilities all create different access and service constraints. A firm can have capable engineers and still underperform if dispatch depends on subcontractors, if parking and loading procedures are unclear, or if after-hours entry turns every service call into a delay.

Start by testing local fit. Ask who covers your sites, who carries badges or escort approval, how they handle dock scheduling, and whether the same team that troubleshoots the fault also closes out the documentation. Those details separate a true service partner from a name on a proposal.

Then press on the work itself:

- Building access and dispatch: How do they handle secure buildings, campus permits, badging, and weekend callouts in Midtown, Buckhead, Perimeter, and medical corridors?

- Fiber and physical layer work: Can they test, inspect, label, and verify routes cleanly, then hand over records your internal team can use?

- Voice and hybrid systems: Can they support aging PBX, gateway, or mixed analog and VoIP environments while a migration is still in progress?

- Regulated environments: What is their method for working in patient-care spaces, labs, clean areas, and restricted telecom rooms without creating a documentation problem?

- Asset tracking: When hardware is swapped, do they record serials, port assignments, and disposition status, or does removed equipment disappear into a back room?

Legacy support still matters in Atlanta. Plenty of organizations have one old voice shelf, gateway, paging controller, or specialty circuit that nobody wants to touch until it fails. A strong provider can keep that equipment stable long enough to support a planned transition, export what needs to be retained, and avoid turning a maintenance visit into an unplanned capital project.

That lifecycle piece gets missed too often.

If your environment includes labs, clinics, or research operations, ask how the provider coordinates with teams handling adjacent infrastructure and retired technical assets. Telecom gear often leaves service during larger refreshes, so the maintenance vendor should be able to support inventories, chain-of-custody records, and handoff requirements alongside programs for lab equipment removal and disposition planning.

Use this shortlist before you sign:

| Checklist area | What to ask |
|---|---|
| Atlanta coverage model | Which technicians are local, which work is subcontracted, and who owns escalation after hours |
| Technical range | Who handles fiber, DAS, cabling, voice, and low-voltage troubleshooting in the field |
| Site discipline | How the team works in hospitals, labs, campuses, and secure commercial properties |
| Closeout quality | Whether they provide redacted examples of test results, labels, service notes, and asset records |
| Legacy transition ability | How they maintain aging systems while planning cutover, record retention, and retirement |
| End-of-life handling | What happens to removed hardware, who documents custody, and where decommissioning responsibility starts and stops |

If your team is also comparing broader voice and telecom vendors, this overview on understanding business communication providers helps frame what different provider types own, and what they don’t.

Ask one final question before award: “Show a redacted ticket where a difficult field issue went from first report to final documentation, including any removed hardware.” Good providers can produce that quickly. Weak ones talk around it.

Beyond Maintenance: Secure Decommissioning and E-Waste

Most telecom guides stop at uptime. That’s incomplete. Every maintenance program eventually creates retired switches, routers, access points, optics, controllers, PBX shelves, UPS components, phones, and cabling. If those assets leave service without secure handling, the maintenance program ends with a compliance gap.

That risk is easy to underestimate. A discarded network appliance may still contain configuration files, stored credentials, call history, access logs, or management data. A voice system pulled from a clinic may still hold records that should have been exported and wiped before removal. A stack of “old gear” sitting in a staging room is often just unsecured data with handles.

The overlooked liability gap

A critical and often overlooked issue appears when lab or medical equipment depends on a telecom network but only the end device is included in disposal planning. Hospitals and research facilities face undocumented risk if the network infrastructure supporting retired devices isn’t also securely decommissioned. Complete compliance requires an audit trail showing secure removal and data deletion for both the end device and its network connection, as explained in this discussion of the telecom-related decommissioning liability gap.

That matters in practice because decommissioning rarely happens one asset at a time. It happens during renovations, lab closures, departmental moves, equipment upgrades, and site consolidations. Under those conditions, teams focus on getting rooms cleared and projects finished. Records are where mistakes happen.

What a clean end-of-life process looks like

Strong decommissioning practice usually includes:

- Asset identification: Match each removed telecom component to an inventory or removal list.

- Data handling: Wipe or destroy storage-bearing components before final disposition.

- Chain of custody: Record who removed it, where it went, and when control changed hands.

- Environmental handling: Route hardware through certified recycling rather than informal discard channels.

- Documentation: Keep certificates, pickup records, internal approvals, and system retirement notes together.
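
The five items above can be treated as a completeness check on each retired asset's record. The Python sketch below is one minimal way to express that. The `RetiredAsset` field names and the sample serial number are hypothetical, and a real program would normally pull these values from an asset management system rather than set them by hand.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class RetiredAsset:
    serial: str
    on_removal_list: bool = False                 # asset identification
    sanitization_method: Optional[str] = None     # e.g. "wipe" or "destroy"
    custody_events: list = field(default_factory=list)  # (who, where, when) tuples
    recycler: Optional[str] = None                # certified recycler used
    certificate_id: Optional[str] = None          # certificate or pickup record


def missing_records(asset):
    """Return the checklist items still missing for this asset."""
    gaps = []
    if not asset.on_removal_list:
        gaps.append("asset identification")
    if asset.sanitization_method is None:
        gaps.append("data handling")
    if not asset.custody_events:
        gaps.append("chain of custody")
    if asset.recycler is None:
        gaps.append("environmental handling")
    if asset.certificate_id is None:
        gaps.append("documentation")
    return gaps


# Hypothetical example: a switch that was pulled and logged, but not yet wiped.
switch = RetiredAsset(
    serial="SN-EXAMPLE-001",
    on_removal_list=True,
    custody_events=[("tech A", "IDF-3 to staging", "2024-01-08")],
)

print(missing_records(switch))
```

An empty list is the condition for releasing the asset to final disposition. Anything else is a gap to close before the hardware leaves the building.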

For lab-heavy and regulated environments, telecom retirement should be tied to the broader facility shutdown or equipment removal process. That’s especially true when analyzers, incubators, imaging support systems, or environmental controls were network-connected. Teams planning that kind of coordinated shutdown should align telecom removals with lab equipment disposition workflows.

Maintenance keeps your systems stable today. Secure decommissioning keeps yesterday’s systems from becoming tomorrow’s incident.

If your Atlanta organization is retiring lab equipment, servers, storage, or network-connected electronics as part of a telecom upgrade, renovation, or facility shutdown, Scientific Equipment Disposal can help close the loop. Their team supports on-site pickup, de-installation logistics, compliant recycling, and data sanitization for regulated environments that can’t afford loose ends in the asset lifecycle.